Jpeg’s libjpeg.so library will be replaced later in these instructions with libjpeg-turbo’s one for the duration of the build. Pip uninstall -y pillow pil jpeg libtiff libjpeg-turboīoth conda packages jpeg and libjpeg-turbo contain a libjpeg.so library. Here is the tl dr version to install Pillow-SIMD w/ libjpeg-turbo and w/o TIFF support:Ĭonda uninstall -y -force pillow pil jpeg libtiff libjpeg-turbo

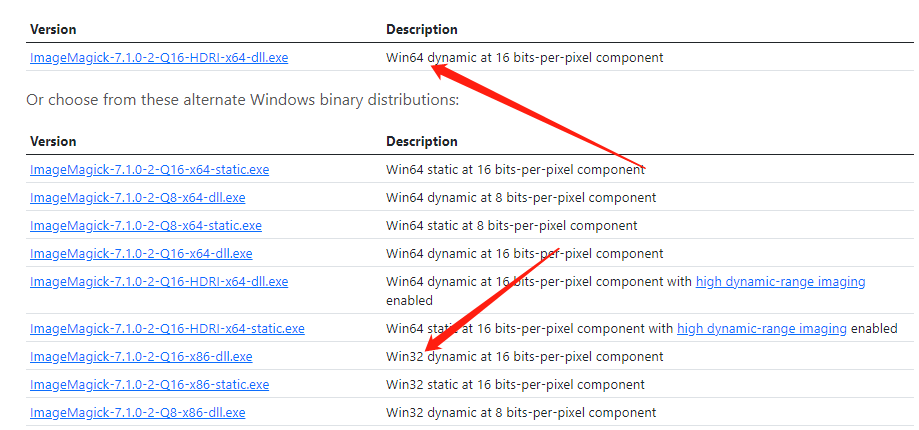

This section explains how to install Pillow-SIMD w/ libjpeg-turbo (but the very tricky libjpeg-turbo part of it is identically relevant to Pillow - just replace pillow-simd with pillow in the code below). The Pillow-SIMD release cycle is made so that its versions are identical Pillow’s and the functionality is identical, except Pillow-SIMD speeds up some of them (e.g. That’s the main reason it’s a fork of Pillow and not backported to Pillow - the latter is committed to support many other platforms/architectures where SIMD-support is lacking. Pillow-SIMD currently works only on the x86 platform. This is not parallel processing (think threads), but a single instruction processing, supported by CPU, via data-level parallelism, similar to matrix operations on GPU, which also use SIMD. Pillow-SIMD is highly optimized for common image manipulation instructions using Single Instruction, Multiple Data ( SIMD approach, where multiple data points are processed simultaneously. This library in its turn is 4-6 times faster than Pillow, according to the same benchmarks. Relatively recently, Pillow-SIMD was born to be a drop-in replacement for Pillow. Then, Pillow forked PIL as a drop-in replacement and according to its benchmarks it is significantly faster than ImageMagick, OpenCV, IPP and other fast image processing libraries (on identical hardware/platform). Backgroundįirst, there was PIL (Python Image Library). There is a faster Pillow version out there. To learn how to rebuild Pillow-SIMD or Pillow with libjpeg-turbo see the Pillow-SIMD entry. Some packages that rely on this library will be able to start using it right away, most will need to be recompiled against the replacement library.įastai uses Pillow for its image processing and you have to rebuild Pillow to take advantage of libjpeg-turbo.

When you install it system-wide it provides a drop-in replacement for the libjpeg library. libjpeg-turbo is generally 2-6x as fast as libjpeg, all else being equal. On x86 platforms it accelerates baseline JPEG compression and decompression and progressive JPEG compression. Libjpeg-turbo is a JPEG image codec that uses SIMD instructions (MMX, SSE2, AVX2, NEON, AltiVec).

This is a faster compression/decompression libjpeg drop-in replacement. If you need faster image resize, blur, alpha composition, alpha premultiplication, division by alpha, grayscale and other image manipulations you need to switch to Pillow-SIMD.Īt the moment this section is only relevant if you’re on the x86 platform. If you notice a bottleneck in JPEG decoding (decompression) it’s enough to switch to a much faster libjpeg-turbo, using the normal version of Pillow. Python -c "import fastai.utils _perf()"Ĭombined FP16/FP32 training can tremendously improve training speed and use less GPU RAM.
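After rebuilding, it helps to confirm what Python actually loads. The sketch below is a hypothetical helper (`image_stack_report` is a name made up for this example, not fastai's own check). It rests on two assumptions: that Pillow-SIMD releases carry a `.postN` suffix in `PIL.__version__`, and that `PIL.features.check("libjpeg_turbo")` reports libjpeg-turbo support. Only recent Pillow releases know that feature flag; older ones just warn and report False.

```python
import importlib


def image_stack_report():
    """Hypothetical helper: describe the installed Pillow flavour.

    Returns a dict and does not raise if Pillow is missing.
    """
    try:
        PIL = importlib.import_module("PIL")
        features = importlib.import_module("PIL.features")
    except ImportError:
        return {"pillow_version": None, "simd": False, "libjpeg_turbo": False}

    version = PIL.__version__
    # Assumption: Pillow-SIMD releases use a ".postN" version suffix
    # (e.g. "9.0.0.post1"); stock Pillow releases do not.
    simd = ".post" in version
    # features.check() returns False (with a warning) for feature names an
    # older Pillow does not know, so this call is safe across versions.
    turbo = bool(features.check("libjpeg_turbo"))
    return {"pillow_version": version, "simd": simd, "libjpeg_turbo": turbo}


if __name__ == "__main__":
    print(image_stack_report())
```

If `simd` or `libjpeg_turbo` comes back False after the rebuild, the most likely cause is that a wheel of stock Pillow or the original libjpeg is still being picked up.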
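To see whether JPEG decoding or resizing is the bottleneck, and whether switching libraries paid off, you can run a rough timing harness before and after the rebuild. This is a hypothetical sketch: `bench_jpeg` is a made-up name, and the input is random noise encoded as JPEG in memory rather than your own dataset. Taking the minimum over repeats is just one simple way to reduce timing noise.

```python
import io
import os
import time


def bench_jpeg(size=(1024, 768), target=(224, 224), repeat=20):
    """Hypothetical helper: time JPEG decoding and resizing with Pillow.

    Returns the best (minimum) time in milliseconds for each step.
    """
    from PIL import Image  # same import for Pillow and Pillow-SIMD

    w, h = size
    # Random RGB pixels compress poorly, so the decoder has real work to do.
    src = Image.frombytes("RGB", size, os.urandom(w * h * 3))
    buf = io.BytesIO()
    src.save(buf, format="JPEG", quality=90)
    data = buf.getvalue()

    decode_times, resize_times = [], []
    for _ in range(repeat):
        t0 = time.perf_counter()
        img = Image.open(io.BytesIO(data))
        img.load()  # Image.open() is lazy; load() forces the actual decode
        t1 = time.perf_counter()
        img.resize(target)  # installed Pillow's default resampling filter
        t2 = time.perf_counter()
        decode_times.append(t1 - t0)
        resize_times.append(t2 - t1)

    return {
        "decode_ms": 1000 * min(decode_times),
        "resize_ms": 1000 * min(resize_times),
    }


if __name__ == "__main__":
    print(bench_jpeg())
```

The numbers are only meaningful relative to each other on the same machine. Faster decoding points to libjpeg-turbo, and faster resizing points to Pillow-SIMD.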
